
Generative work

Below are examples of work created in collaboration with generative models. In each case, my relationship to the work differed from anything else in this portfolio. Depending on the project, I acted as director, creative partner, or lead collaborator working alongside another mind.

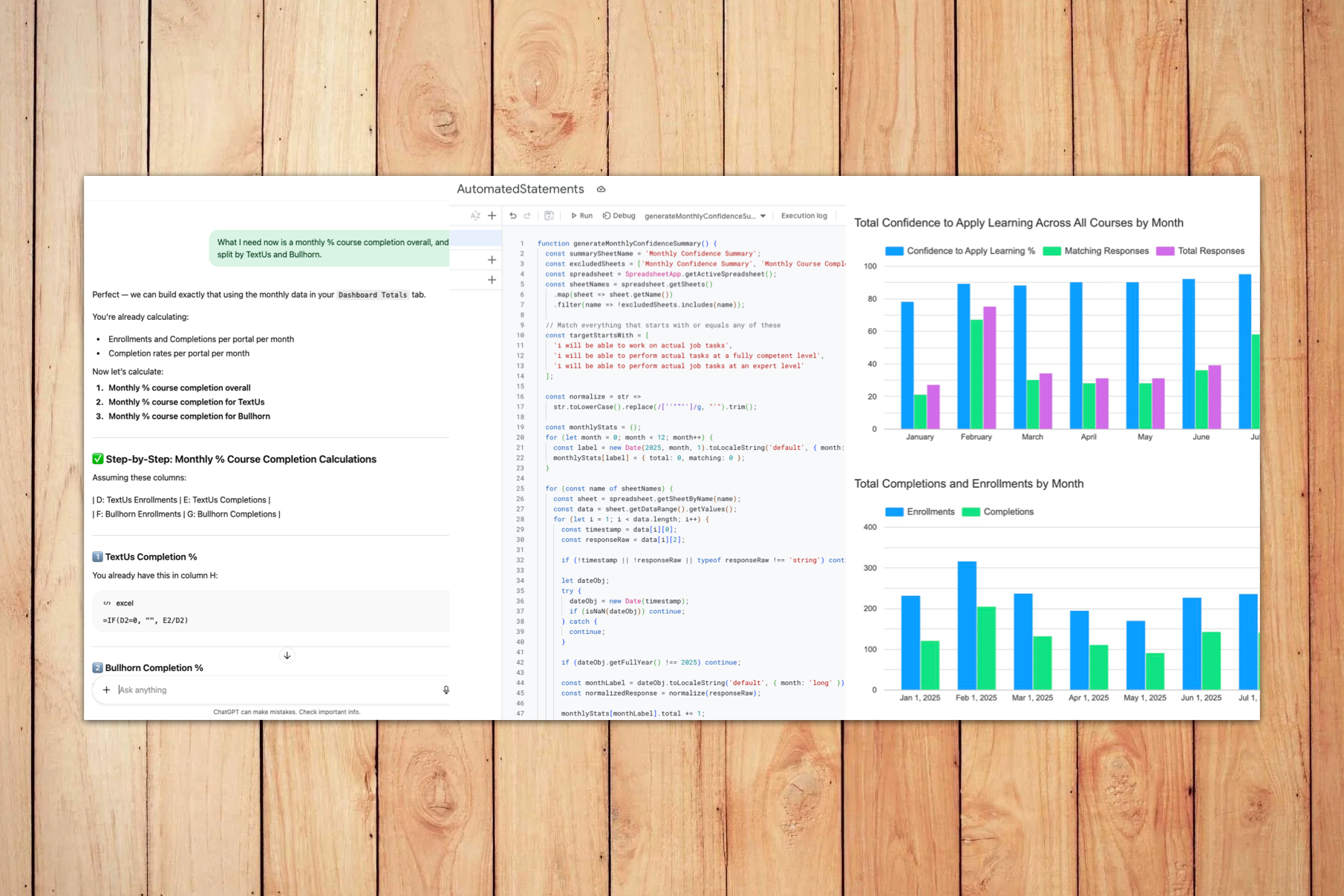

The first example is a live Learning Dashboard built in Looker Studio. For years I had wanted automated reporting on learning data. At Slack and Sprout Social, developers built it for me. At TextUs, there was no bandwidth. So I partnered with ChatGPT to design the data architecture and generate the script that made it real. An Apps Script integration pulls data from the Thinkific API into Google Sheets, and Looker Studio turns that sheet into a dashboard that updates automatically every day. Leadership noticed. The project has since grown into a larger data warehouse initiative connecting course completions to actual product behavior.
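The dashboard's actual Apps Script is not reproduced here. As a rough illustration only, the Python sketch below shows the fetch, paginate, and flatten step a job like this performs before the rows land in a sheet. Several details are assumptions about Thinkific's Admin API and should be checked against its current documentation: the endpoint URL, the `X-Auth-API-Key` / `X-Auth-Subdomain` headers, the `page` / `limit` query parameters, the `meta.pagination.next_page` field, and the enrollment field names in `COLUMNS`. The helpers `http_fetch_page`, `iter_enrollments`, and `to_rows` are hypothetical.

```python
import json
import urllib.request
from typing import Callable, Iterable, Iterator

# ASSUMPTION: Thinkific Admin API enrollments endpoint; verify before use.
API_URL = "https://api.thinkific.com/api/public/v1/enrollments"

# ASSUMPTION: field names in each enrollment record; adjust to the real payload.
COLUMNS = ["user_email", "course_name", "percentage_completed", "completed", "completed_at"]


def http_fetch_page(api_key: str, subdomain: str, page: int, limit: int = 250) -> dict:
    """Fetch one page of enrollments (assumed headers and query parameters)."""
    req = urllib.request.Request(
        f"{API_URL}?page={page}&limit={limit}",
        headers={
            "X-Auth-API-Key": api_key,
            "X-Auth-Subdomain": subdomain,
            "Content-Type": "application/json",
        },
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def iter_enrollments(fetch_page: Callable[[int], dict]) -> Iterator[dict]:
    """Yield every enrollment, following the assumed meta.pagination.next_page until it is empty."""
    page = 1
    while page:
        body = fetch_page(page)
        yield from body.get("items", [])
        page = body.get("meta", {}).get("pagination", {}).get("next_page")


def to_rows(enrollments: Iterable[dict]) -> list:
    """Flatten enrollments into a header row plus one row per enrollment, ready for a sheet."""
    rows = [list(COLUMNS)]
    for e in enrollments:
        # Missing or null fields become empty cells instead of None.
        rows.append(["" if e.get(col) is None else e.get(col) for col in COLUMNS])
    return rows


# Usage (hypothetical credentials):
# rows = to_rows(iter_enrollments(lambda p: http_fetch_page("API_KEY", "yourschool", p)))
```

Passing `fetch_page` in as a function keeps the pagination logic testable without network access. In Apps Script itself, the equivalent write would be something like `sheet.getRange(1, 1, rows.length, rows[0].length).setValues(rows)`, and a time-driven trigger such as `ScriptApp.newTrigger('refreshEnrollments').timeBased().everyDays(1).create()` would provide the daily refresh (`refreshEnrollments` is a hypothetical function name).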

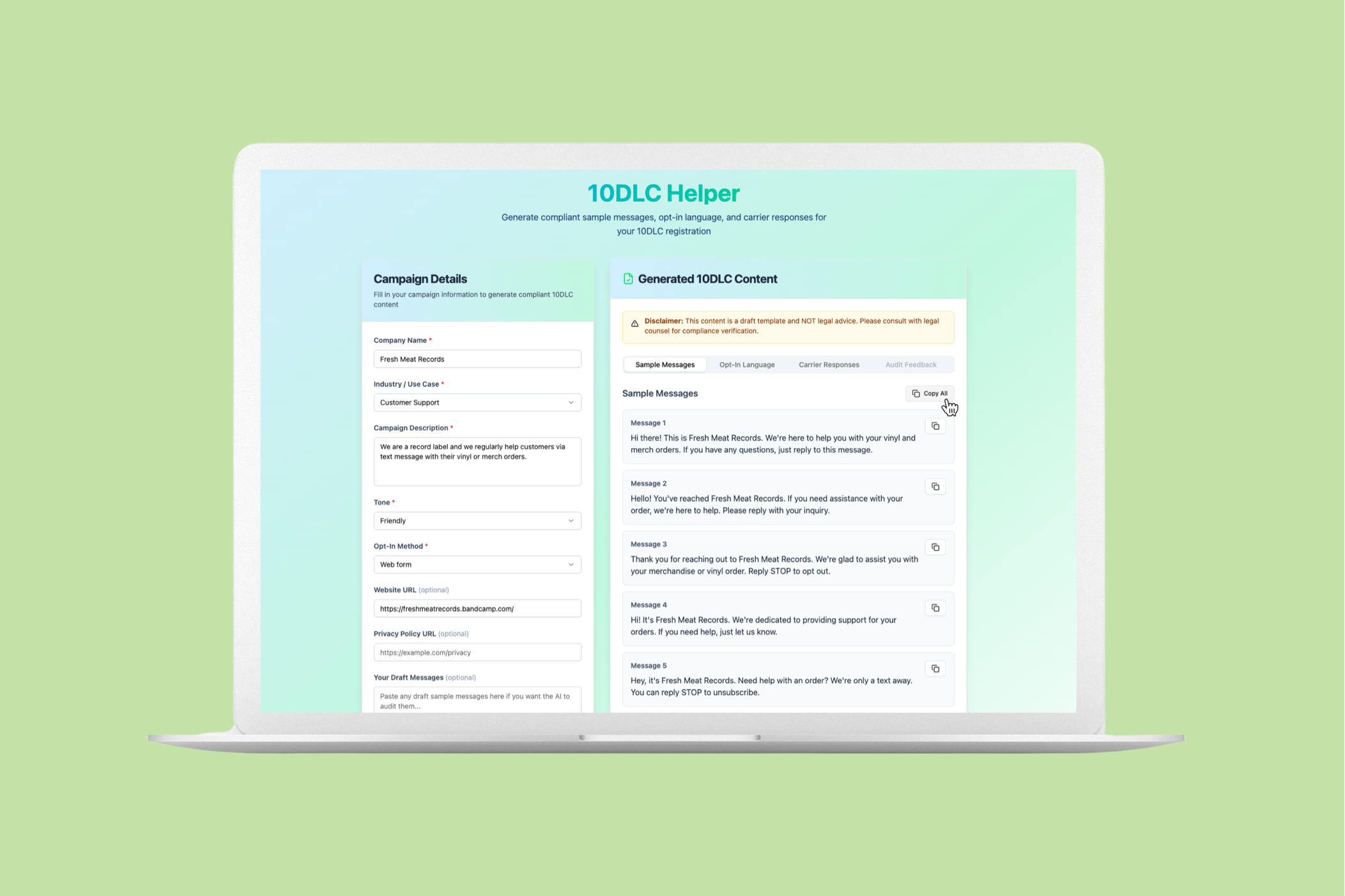

The second example is the 10DLC Helper, an interactive compliance tool built using Base44's vibe coding platform. Rather than create a video or a guide explaining 10DLC SMS registration requirements to new customers, I built an app that walks users through the process and generates the content they need to complete registration. This is what I think of as agentic learning: instead of consuming knowledge, the learner uses a smart tool and walks away with a real output.

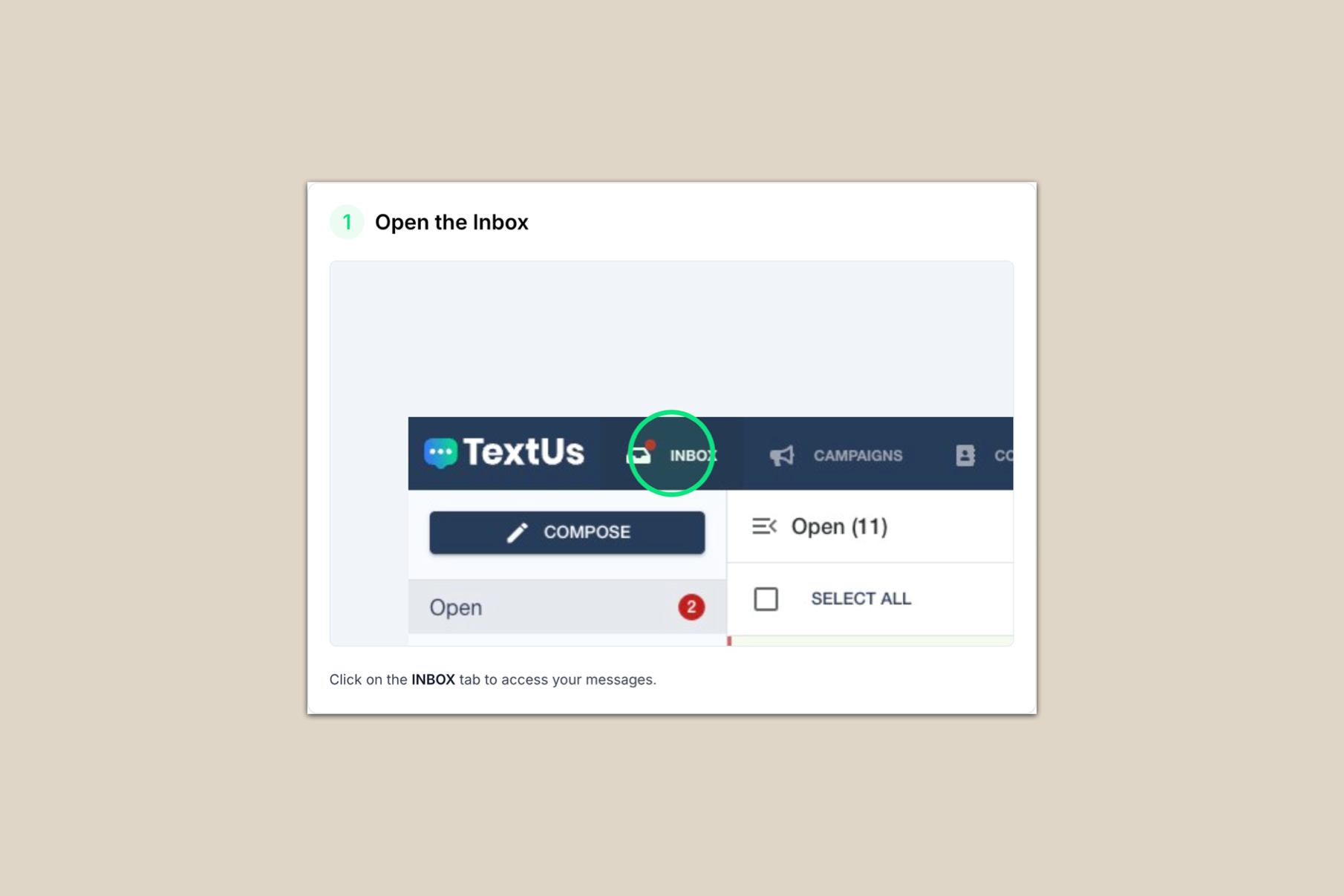

The third example is a how-to guide published in the TextUs Learning Center, generated using GuideMaker. What would have taken 30 to 45 minutes to write, format, and structure by hand was ready in under two minutes. The guide serves as a step-by-step procedure complementing a walkthrough video earlier in the course. It's a quieter example than the others here, but an honest one: sometimes the most useful thing generative tools do is simply give you time back.

The fourth example is a short video produced with Sora, ElevenLabs, and Camtasia. The twist is the voice. After recording hundreds of professional voiceovers over the years, I decided to clone my own voice with ElevenLabs rather than reach for a stock AI voice. I fed the model about 45 minutes of audio, and six hours later I had a working clone. The video pairs that voice with Sora-generated footage. When your customers and team already prefer your voice, maybe the most human choice is to replicate it rather than replace it.